
Let's be real — today's AI language models are awesome at understanding and generating fluent text. But they aren’t perfect.

Today, most agentic AI systems struggle with reasoning as they lack the depth of understanding and contextual awareness inherent to human cognition. For businesses looking to leverage cutting-edge AI solutions, such as our Copilot, these challenges can be a limiting factor.

While we do not have a holistic solution to this reasoning problem, we have taken steps towards what we believe to be the right direction: executing arbitrary code. Executing arbitrary code means a program can run any code it's given, regardless of who provides it. While this won’t solve all of the understanding or contextual problems that LLMs face, it does greatly expand their capabilities.

This approach is not without its risks. Data theft, service disruptions, abuse by bad actors… the risks are very real. Introducing and executing arbitrary code in an enterprise environment is incredibly powerful but also extremely dangerous if not handled properly. Not only do we need to make sure this solution is properly sandboxed, we also need to continuously test it for correctness and reduce the chance of vulnerabilities and exploitation wherever we can.

With this context in mind, this blog will cover:

- The security risks we must grapple with when attempting to boost AI’s capabilities

- Why code execution is a very promising path to enhancing language models’ skills

- The battle-tested methodologies — sandboxing, fuzz testing, and more — we employ to validate the security robustness of our approach, ensuring our Copilot's capabilities don't come at a cost

Executing arbitrary code is the right path forward.

We recognize that executing code offers a very promising path to unlocking LLMs' full potential. While we could train a model, or build templates to slot the data into, code execution allows seamless integration with powerful, established libraries and methods. Crucially, this approach future-proofs the solution by adapting to evolving needs without the costly retraining cycles that a purpose-built model would require.

At Moveworks, we made the strategic decision to rely on the LLM itself to generate code for performing complex operations, enhancing the capabilities of our Copilot. While not a silver bullet, this approach reduces the overall attack surface, because the code that runs is written by the LLM rather than supplied directly by a user.

However, executing third-party code brings security risks that cannot be ignored, especially for enterprise deployments. To navigate this challenge safely, our team implemented a six-step hardening process:

- Choosing the right language

- Testing and limiting execution

- Sandboxing for containment

- Fuzzing the code

- Reducing resource exhaustion risks

- Opening testing to the community

By carefully balancing enablement and risk mitigation through this battle-tested methodology, enterprises can leverage AI's quantitative potential without compromising security or compliance requirements.

Choosing the right language

When dealing with security-critical code execution, how the language handles memory management is one of the first considerations. Non-memory safe languages introduce more opportunities for attackers to exploit vulnerabilities.

This risk is compounded by the fact that executing arbitrary code for compiled languages such as C also requires compilation, adding fragility due to the extra steps required before execution and potential issues with managing build artifacts. Even major web browsers are moving away from non-memory safe languages for security reasons. Python's memory-managed nature and robust security features made it a compelling choice for this use case.

Because of this, we landed on Python as the language of choice, which offered several additional benefits:

- Established in machine learning: Python has become the preferred language for machine learning and numerical computing thanks to libraries like NumPy, SciPy, and Pandas.

- Simplified execution model: As an interpreted language, Python code can execute directly without a compilation step.

- Engineer familiarity: Our engineers already read and write Python daily, which makes debugging potential issues simpler.

While no language is perfect, selecting Python allowed us to leverage its strengths in memory safety, machine learning, and simplicity, enhancing LLM capabilities while taking security into consideration.

Testing and limiting execution capabilities

To prevent potential resource exhaustion or denial-of-service attacks from executed code, we implemented several key restrictions:

- Size limits: Caps were placed on the maximum size of values such as integers (4,294,967,296) and strings (4,096).

- Limited operations: Code execution is limited to 8,192 operations before being forcefully terminated.

- Time restrictions: 8.2 seconds is the maximum time allowed for any code to run.

- Reduced builtins: Standard Python builtins like print, import, and others were removed to restrict functionality.

- Customized builtins: Simplified Python builtins like range were re-implemented to enforce operational counting.

- Custom primitive types: We implemented our own primitive types instead of relying on the default Python types (such as string, int, and float).
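The restrictions above can be sketched in a few lines of Python. This is a minimal illustration, not the actual implementation: the `Budget` class, `check_value`, `counted_range`, and `LimitExceeded` are hypothetical names, and only the numeric limits come from the list above.

```python
import time

# Limits quoted in the list above.
MAX_OPERATIONS = 8_192
MAX_SECONDS = 8.2
MAX_INT = 4_294_967_296
MAX_STR_LEN = 4_096


class LimitExceeded(Exception):
    """Raised when executed code goes over one of its budgets."""


class Budget:
    """Tracks operations and elapsed time for one execution (hypothetical helper)."""

    def __init__(self, max_ops=MAX_OPERATIONS, max_seconds=MAX_SECONDS):
        self.max_ops = max_ops
        self.ops = 0
        self.deadline = time.monotonic() + max_seconds

    def tick(self, cost=1):
        """Charge `cost` operations and stop the run if a budget is spent."""
        self.ops += cost
        if self.ops > self.max_ops:
            raise LimitExceeded("operation limit exceeded")
        if time.monotonic() > self.deadline:
            raise LimitExceeded("time limit exceeded")


def check_value(value):
    """Reject integers and strings that exceed the size caps."""
    if isinstance(value, int) and not isinstance(value, bool) and abs(value) > MAX_INT:
        raise LimitExceeded("integer too large")
    if isinstance(value, str) and len(value) > MAX_STR_LEN:
        raise LimitExceeded("string too long")
    return value


def counted_range(budget, *args):
    """A stand-in for range that charges one operation per item produced."""
    for item in range(*args):
        budget.tick()
        yield item


# Usage: a loop that stays within every limit.
budget = Budget()
total = 0
for i in counted_range(budget, 100):
    total = check_value(total + i)
```

Charging the budget inside the replacement builtin means a loop cannot run past the operation cap, no matter how the generated code is written.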

Sandboxing for containment

Sandboxing refers to executing untrusted code within an isolated environment with restricted permissions and access. This approach provides several key security benefits:

- Permissions lockdown: The sandbox has significantly reduced permissions compared to the full system, limiting the potential blast radius of a compromise. For example, the sandbox does not have access to the Python namespace.

- Resource constraints: Sandboxes typically have access to fewer resources and services than the parent environment, such as not being able to access the network.

- Containment: After the code runs, the process and its sandbox are destroyed, significantly reducing the time attackers have to break out of it.
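The containment idea, a short-lived process that is discarded after each run, can be illustrated with Python's standard `subprocess` module. This is only a sketch of the process lifecycle: `run_in_throwaway_process` is a hypothetical helper, and a plain child process is not a sandbox on its own, since it still has file system and network access unless the operating system restricts it.

```python
import subprocess
import sys


def run_in_throwaway_process(source, timeout_seconds=8.2):
    """Run `source` in a fresh interpreter that exits when the code finishes."""
    try:
        completed = subprocess.run(
            # -I starts Python in isolated mode (ignores environment
            # variables and the user site directory).
            [sys.executable, "-I", "-c", source],
            capture_output=True,
            text=True,
            timeout=timeout_seconds,  # the child is killed if this expires
        )
    except subprocess.TimeoutExpired:
        return {"status": "timeout", "stdout": "", "returncode": None}
    status = "ok" if completed.returncode == 0 else "error"
    return {"status": status, "stdout": completed.stdout, "returncode": completed.returncode}


result = run_in_throwaway_process("print(1 + 1)")
```

Because each run gets its own process, nothing the code does to interpreter state survives into the next request.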

Even with LLM-generated code, the risks of compromise and potential misuse required additional sandboxing measures:

- Restricted operations: By parsing the code's Abstract Syntax Tree (AST), we allow only a permitted set of operations (such as addition, multiplication, and sorting) to execute, preventing unauthorized network calls or malicious actions.

- Memory isolation: The code runs in a dedicated memory space and custom namespace, preventing access to global interpreter state or resources.

- Custom interpreter: A slimmed-down Python interpreter was implemented, removing builtin functions like print, import, and others, further limiting potential attack vectors.

- Reducing attack surface: Thanks to the solution's architecture, we also gain some benefits from query rewriting that makes it harder for attackers to control the output from the LLMs.
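As a rough sketch of the AST-based restriction and custom namespace described above, the standard `ast` module can reject any construct that is not explicitly allowed before the code runs. The allow-list, the `SAFE_FUNCTIONS` table, and the function names below are assumptions made for illustration. The real set of permitted operations is not published here.

```python
import ast

# Hypothetical allow-list of AST node types (assumes Python 3.8+).
ALLOWED_NODES = (
    ast.Module, ast.Expr, ast.Assign, ast.Name, ast.Load, ast.Store,
    ast.Constant, ast.List, ast.BinOp, ast.Add, ast.Sub, ast.Mult, ast.Div,
    ast.Call,
)
# Hypothetical set of functions exposed to the generated code.
SAFE_FUNCTIONS = {"sorted": sorted, "sum": sum, "min": min, "max": max}


class DisallowedCode(Exception):
    """Raised when the source uses a construct outside the allow-list."""


def validate(source):
    """Parse the source and reject any AST node that is not explicitly allowed."""
    tree = ast.parse(source)
    for node in ast.walk(tree):
        if not isinstance(node, ALLOWED_NODES):
            raise DisallowedCode(f"{type(node).__name__} is not permitted")
        if isinstance(node, ast.Name) and node.id.startswith("_"):
            raise DisallowedCode("underscore-prefixed names are not permitted")
        if isinstance(node, ast.Call):
            func = node.func
            if not (isinstance(func, ast.Name) and func.id in SAFE_FUNCTIONS):
                raise DisallowedCode("call to a function outside the allow-list")
    return tree


def run_validated(source):
    """Execute validated code in a custom namespace with no default builtins."""
    tree = validate(source)
    global_ns = {"__builtins__": {}, **SAFE_FUNCTIONS}
    local_ns = {}
    exec(compile(tree, "<generated>", "exec"), global_ns, local_ns)
    return local_ns


result = run_validated("total = sum([1, 2, 3]) * 2")
```

An allow-list fails closed: imports, attribute access, and any syntax the list does not name are rejected by default, which is safer than trying to enumerate every dangerous construct.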

This multi-layered approach of LLM-generated code combined with sandboxing controls aims to minimize risks while still unlocking enhanced capabilities for enterprise LLM deployments.

The constraints mentioned above make it difficult for executed code to consume excessive resources or traverse paths leading to denial-of-service conditions. Combined with these sandboxing measures, the execution environment is hardened against potential abuse and misuse.

Testing the code with fuzzing

After manually testing the secure code execution environment, we still required more comprehensive validation given the high risks involved with running untrusted code. Fuzzing — the process of feeding randomly generated inputs to identify potential vulnerabilities — was employed using the powerful Domato fuzzer from Google Project Zero.

Domato generates millions of "random" Python programs based on provided grammars and templates. While originally designed for fuzzing browser DOMs, it was easily extended to fuzz the custom Python interpreter. After defining a Python grammar and providing sample code, Domato achieved full coverage of the new execution environment.
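To make the idea of grammar-based fuzzing concrete, here is a toy generator written in plain Python. It does not use Domato or its grammar format. The grammar, `expand`, `generate_program`, and `fuzz` are all hypothetical, and Python's own parser stands in for the sandboxed interpreter as the fuzzing target.

```python
import ast
import random

# Toy grammar (hypothetical): each symbol maps to its alternative expansions.
GRAMMAR = {
    "<stmt>": [["<var>", " = ", "<expr>"]],
    "<expr>": [["<term>"], ["<term>", " ", "<op>", " ", "<term>"], ["(", "<expr>", ")"]],
    "<term>": [["<var>"], ["<num>"]],
    "<op>": [["+"], ["-"], ["*"]],
    "<var>": [["a"], ["b"], ["c"]],
    "<num>": [["0"], ["1"], ["42"], ["4294967296"]],
}


def expand(symbol, rng, depth=0, max_depth=6):
    """Recursively expand a grammar symbol into a string of source code."""
    if symbol not in GRAMMAR:
        return symbol  # terminal text is emitted as-is
    options = GRAMMAR[symbol]
    if depth >= max_depth:
        options = [min(options, key=len)]  # shortest rule forces termination
    parts = rng.choice(options)
    return "".join(expand(part, rng, depth + 1, max_depth) for part in parts)


def generate_program(rng, statements=5):
    """Build a small program made of randomly generated statements."""
    return "\n".join(expand("<stmt>", rng) for _ in range(statements))


def fuzz(target, iterations, seed=0):
    """Feed generated programs to `target` and collect the inputs that raise."""
    rng = random.Random(seed)
    failures = []
    for _ in range(iterations):
        program = generate_program(rng)
        try:
            target(program)
        except Exception as exc:  # any exception counts as a finding here
            failures.append((program, exc))
    return failures


# Stand-in target: a real run would call the sandboxed interpreter instead.
failures = fuzz(ast.parse, iterations=200)
```

Seeding the random generator makes every failing input reproducible, which matters when a finding has to be investigated and fixed.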

To date, over 100 million lines of Python code have been fuzzed inside this sandboxed system, with one vulnerability discovered and remediated.

Opening testing to the community

While extensive internal testing and fuzzing were performed, the security community's scrutiny provides an invaluable opportunity to uncover potential blind spots.

As part of our Copilot’s release, we’ve launched a private bug bounty program on HackerOne, opening up the code execution environment for ethical hackers to probe and attempt to bypass its security controls.

Expanding testing beyond internal teams and leveraging the invaluable expertise of external security researchers is a critical step in establishing confidence and trust in the solution's resilience against real-world threats.

By taking these hardening steps, our Copilot can now reliably handle certain tasks that initially stumped language models.

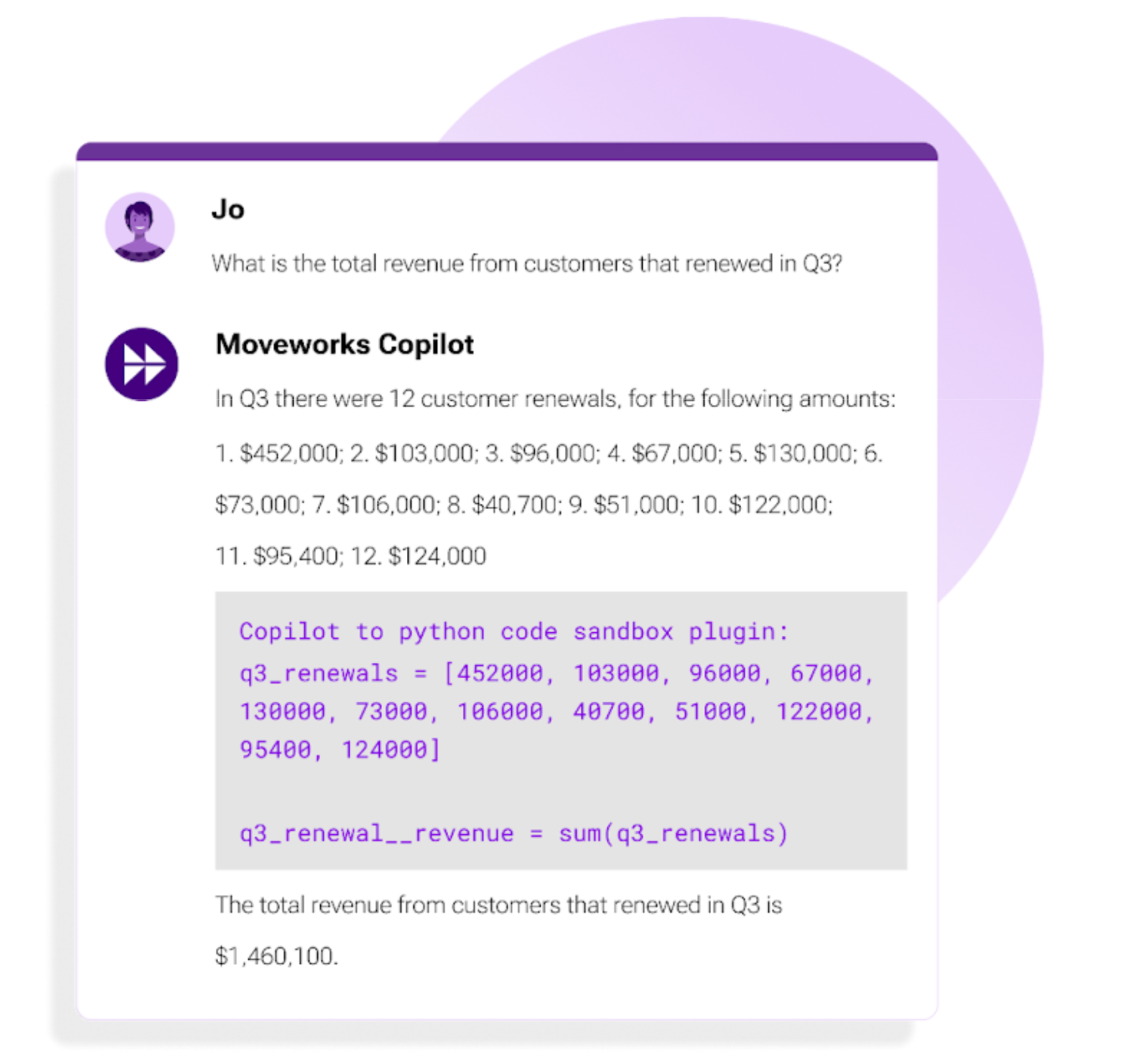

We asked our Copilot: "What is the total revenue from customers that renewed in Q3?"

In certain situations, our Copilot can parse and interpret numbers as true variables, not just text, helping the LLM to perform certain types of calculations.

As a result, the Copilot can produce the correct response of $1,460,100 for Q3 renewal revenue. All of this enhanced processing happens seamlessly under the hood in a matter of seconds.
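The kind of code an LLM might generate for a question like this could look roughly like the sketch below. The rows are made-up sample data, not the data behind the answer above, and the field names and helper functions are assumptions for illustration.

```python
from decimal import Decimal

# Made-up sample rows for illustration only.
rows = [
    {"customer": "Acme", "renewed_quarter": "Q3", "revenue": "$120,000"},
    {"customer": "Globex", "renewed_quarter": "Q2", "revenue": "$75,500"},
    {"customer": "Initech", "renewed_quarter": "Q3", "revenue": "$30,250"},
]


def parse_currency(text):
    """Turn a string like '$120,000' into an exact decimal number."""
    return Decimal(text.replace("$", "").replace(",", ""))


def total_revenue(rows, quarter):
    """Sum revenue for customers that renewed in the given quarter."""
    return sum(
        (parse_currency(row["revenue"]) for row in rows if row["renewed_quarter"] == quarter),
        Decimal(0),
    )


q3_total = total_revenue(rows, "Q3")
```

Treating the amounts as numbers rather than text is what lets the total be computed exactly instead of being predicted token by token.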

With our secure code execution solution implemented, our Copilot processes the query, accurately calculating the total revenue from Q3 customer renewals as $1,460,100.

Leveling up LLMs — without compromising security

For businesses looking to unlock the full potential of AI, overcoming its inherent limitations is critical. However, the straightforward solution of executing code to enhance AI’s abilities raises legitimate security concerns. Allowing arbitrary code execution without safeguards is an unacceptable risk that could lead to data breaches, system disruption, and compliance nightmares.

This blog presents our approach to improving AI capabilities through secure, sandboxed execution of LLM-generated code. By carefully balancing enablement and risk mitigation, enterprises can leverage AI's quantitative potential while still adhering closely to security and compliance requirements.

From strategic technology choices like Python, to layered isolation techniques, to extensive security validation through fuzzing and bug bounties — this solution aims to be the secure path for enterprises looking to get the most out of AI.

This capability lays the groundwork for us to securely develop additional features that rely on computation-based problem solving.

As AI's impact grows, addressing gaps through secure, hardened methods will become table stakes. And for businesses betting on AI, our approach aims to maximize both LLM capabilities and security in one package.

We would like to thank the individuals listed below for their invaluable feedback:

Zach Hoffman, Software Engineer, Machine Learning

Eric Gaudet, Software Engineer, Machine Learning
