The premise of a Slack channel is simple: strength in numbers. Over the last few years, IT teams have embraced employee-facing chat channels as an alternative way to provide support, since there’s a good chance that someone in the group is experiencing the same issue, or has created a workaround, or even knows the solution.

In reality, of course, these helpful messages will be mixed in with just as many unhelpful ones, meaning that service desk agents must read through each thread to determine the best course of action. It all amounts to lots of extra work for IT teams that are already overextended.

Now imagine the ideal team member in the IT support channel. They read every single message — day or night, weekend or holiday — and always respond when they can help. They go beyond giving advice; in many cases, they resolve the problem for you in seconds, without any effort from the IT team. Well, after extensive R&D and field testing, that ideal team member is ready for action. Introducing Moveworks’ Channel Resolver for Slack:

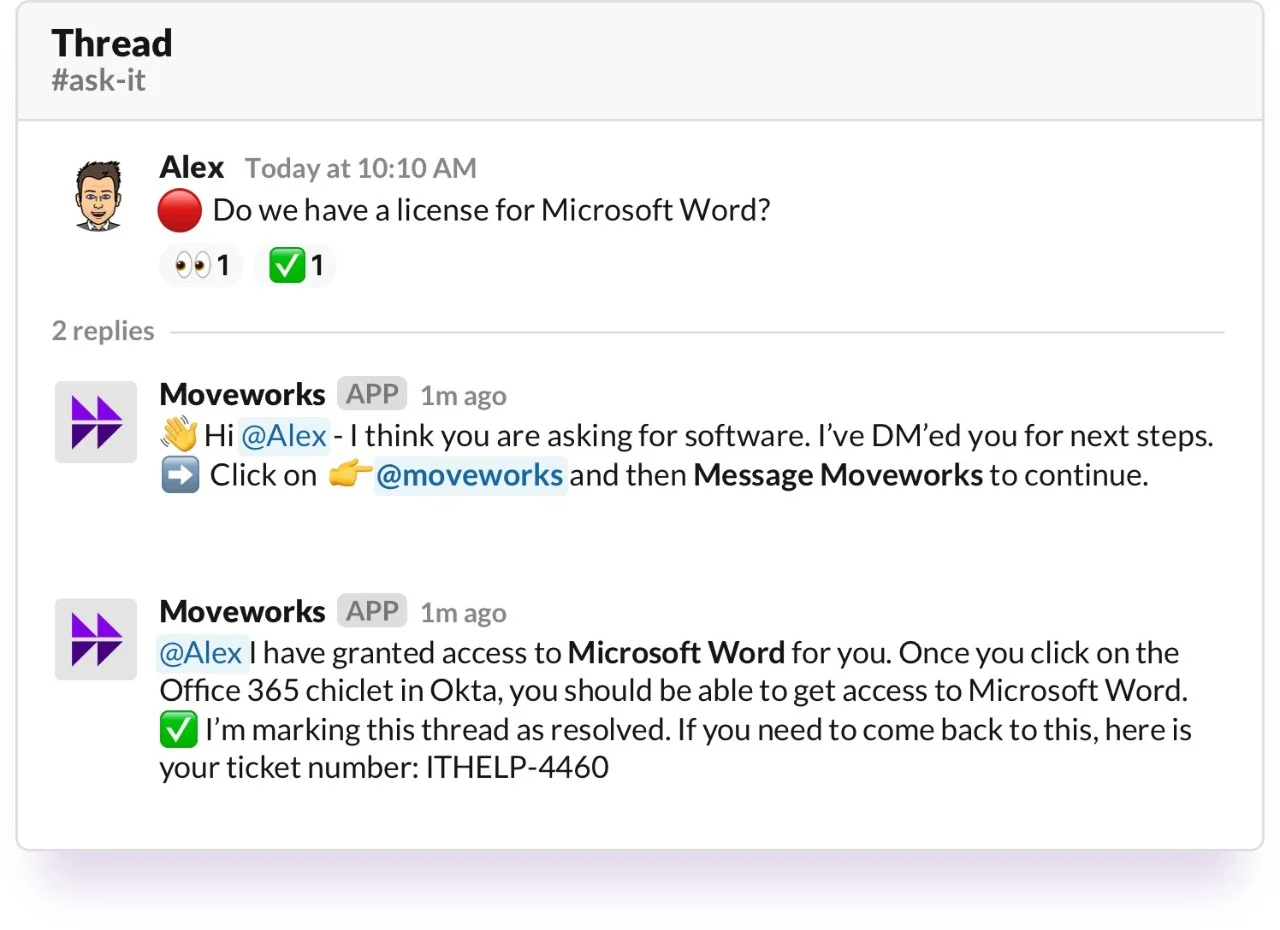

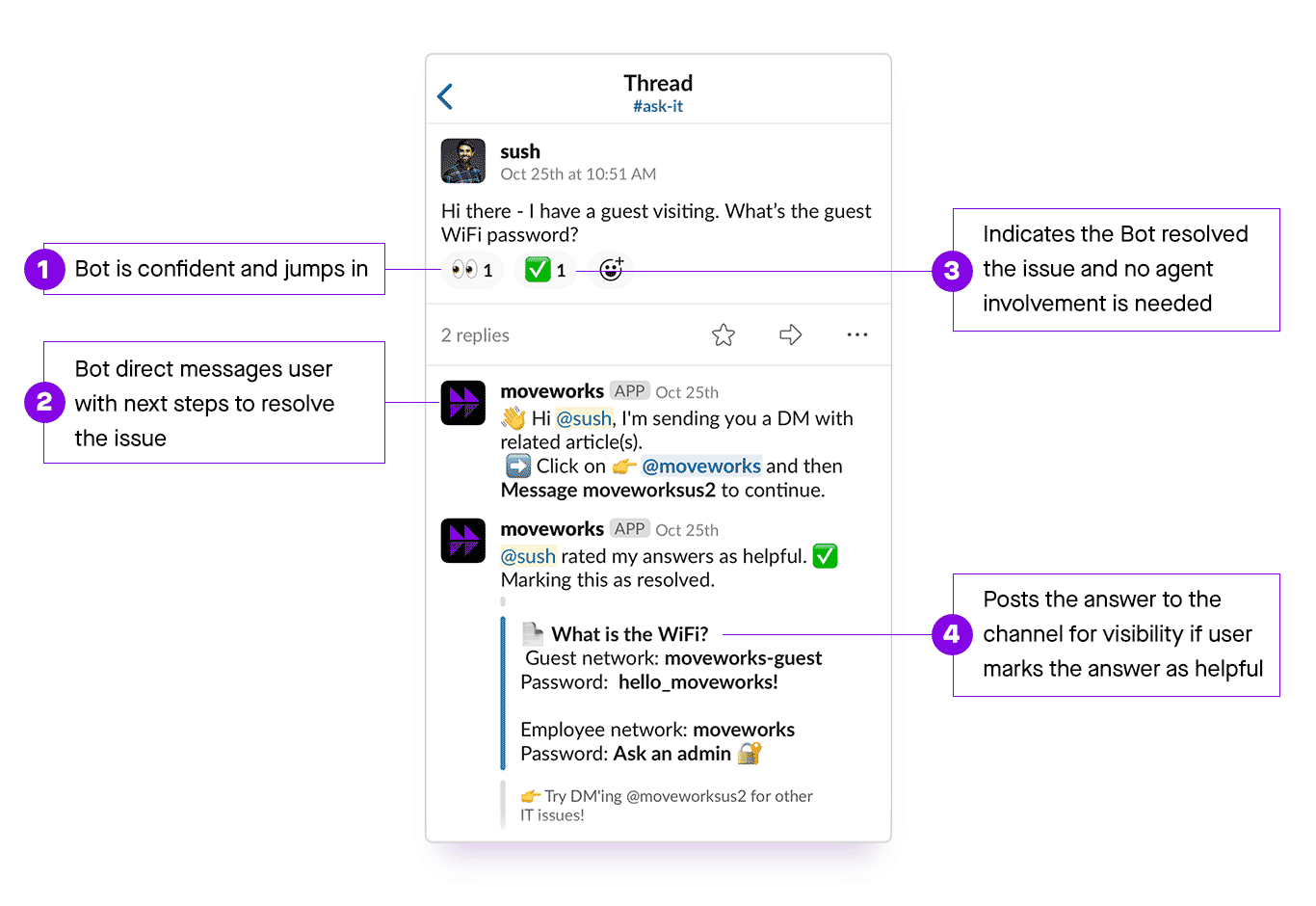

Figure 1: Channel Resolver messages the employee directly, then updates the channel.

Supercharging IT support on Slack

Moveworks understands the challenges employees face when trying to get a support issue resolved — and how delays in resolution hinder their productivity. Our vision has always been to make it easy to solve employee issues through the use of natural language understanding and conversational AI: resolution should come instantly, no matter where those issues are reported. Channel Resolver represents a major step toward realizing that vision of frictionless IT support.

Available to all Moveworks customers who use Slack, Channel Resolver is a version of our AI bot that resides in a public chat channel. It analyzes each employee question, and if our natural language understanding (NLU) and resolution platform predicts that it can remediate the issue, it jumps in. In fact, an initial group of a dozen customers — including AppDynamics — have already transformed their IT support with Channel Resolver.

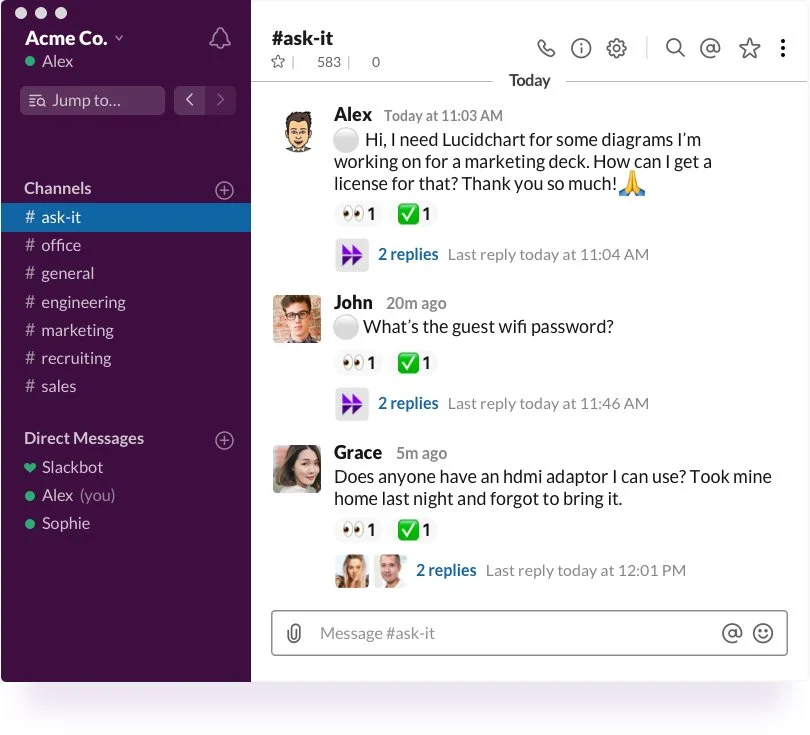

Unsurprisingly, the trend toward Slack channels extends across most enterprise workstreams, since they promote transparency and enable teams to benefit from the wisdom of the crowd. IT teams in particular have adopted this open communication approach, using a dedicated channel where employees can raise issues and get assistance. Such “Ask-IT” channels are becoming increasingly popular places for employees to raise common questions:

Figure 2: Using standard emojis, Channel Resolver indicates both that it’s analyzed an employee’s message (the eyes) and that the issue’s been resolved (the checkmark).

From a broader perspective, IT channels complement the modern, chat-first business strategy, which integrates formerly separate workflows on the chat tool that employees use every day. There’s no longer any need to log in to specialized tools like portals, as we explained in our post, "Anytime, anywhere, automatic." But resolving employees’ issues over chat also presents challenges for IT teams. How do you stay on top of so many questions and requests? And how do you respond fast enough that employees feel they're being heard?

We recognized that natural language understanding and conversational AI could address these challenges, and we built the Channel Resolver capability to do just that.

A new way to support employees

As a machine learning company, we learn a lot from data, which we augment with customer feedback from onsite usability studies, interviews, and pilot programs to bridge the gap between theory and reality. A recurring theme in our research and customer discussions was that, with company-wide IT channels exploding in popularity, keeping pace with requests in those channels was becoming impractical for IT support teams.

Our task, then, was to build a mechanism through which we could do the following:

- Use NLU to understand messages posted in the IT channel

- Resolve issues autonomously whenever feasible

- Adhere to the unique conventions and processes of in-channel support

- Hand issues off to agents if necessary

This would all have to be done with extremely high precision because, when you make a mistake in a public channel, everybody can see it. And at the same time, we would have to maintain high coverage — the percentage of requests handled by the bot — to ensure issues wouldn’t pile up in IT agents’ queues. Needless to say, building this capability is not trivial. Below, we explore the reasons why supporting channels is even harder than supporting requests that come in via direct message (DM).
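
To make the two targets above concrete, here is a minimal sketch of how precision and coverage could be computed from a log of channel requests. The `Outcome` record and its field names are hypothetical and exist only for illustration; this is not Moveworks' actual measurement code.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Outcome:
    """One request posted in the channel (hypothetical record format)."""
    bot_responded: bool  # did the bot jump in at all?
    bot_correct: bool    # was its response right? Ignored if it stayed silent.


def coverage(outcomes: List[Outcome]) -> float:
    """Share of all requests that the bot handled."""
    if not outcomes:
        return 0.0
    return sum(o.bot_responded for o in outcomes) / len(outcomes)


def precision(outcomes: List[Outcome]) -> float:
    """Share of the bot's responses that were correct."""
    responded = [o for o in outcomes if o.bot_responded]
    if not responded:
        return 0.0
    return sum(o.bot_correct for o in responded) / len(responded)


# Ten requests: the bot answers four, and three of those answers are right.
sample = (
    [Outcome(True, True)] * 3
    + [Outcome(True, False)]
    + [Outcome(False, False)] * 6
)
print(coverage(sample), precision(sample))
```

In this made-up sample the bot covers 40% of requests with 75% precision. Raising the bar for when the bot responds tends to push precision up and coverage down, which is the tension described above.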

Separating the signal from the noise

Channels are significantly “noisier” than DMs or emails: they contain broadcast announcements from service desk agents, non-IT-related interactions between employees, reposted questions, jokes, memes, and everything in between. All of this makes in-channel messages much harder to understand than messages sent in a direct, employee-to-bot conversation.

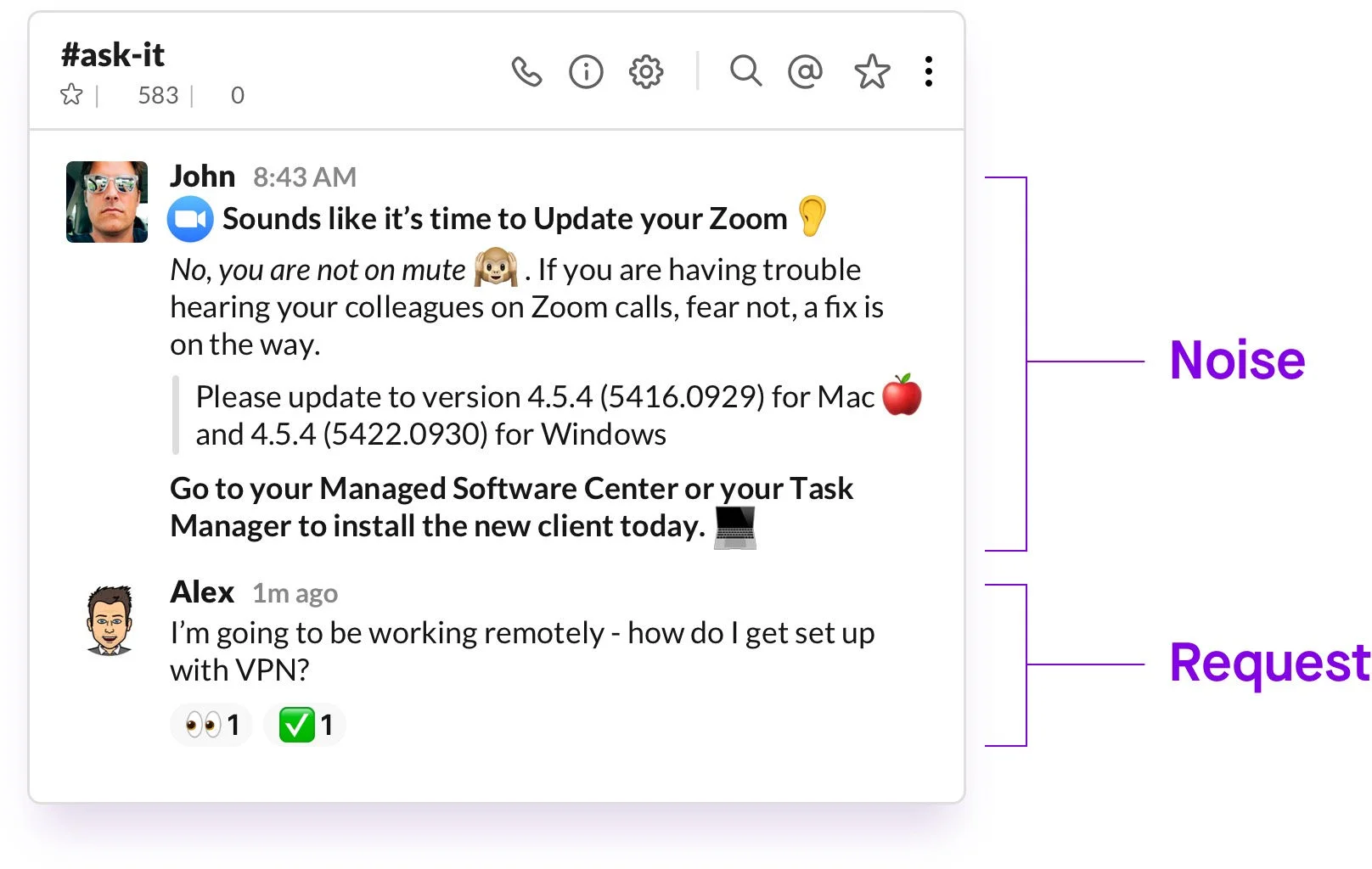

To illustrate the point, consider this example of signal intermixed with noise — a broadcast announcement followed by an actual employee issue:

Figure 3: Separating the signal from the noise is intuitive for humans, but profoundly difficult for machines.

The overarching challenge here is that the structure of messages, comments, and responses is unpredictable. Our NLU system needs to be able to parse these complex utterances and provide users with the right answers when needed, an objective that requires many machine learning models to achieve.

Resolve, route, or disregard: Determining the right response

As we’ve seen, channels are not a good place for trigger-happy bots. Given the public nature of the channel setting, it’s critical for a bot to jump in only when it’s confident it can resolve an employee issue. The machine learning model thresholds for in-channel responses must therefore be tuned to trigger at a higher confidence level than for DMs.
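
As a rough illustration of that tuning, the sketch below chooses among resolve, route, and disregard with a stricter resolve threshold for channels than for DMs. The threshold values, the two input scores, and every name here are assumptions made for the example, not Moveworks' real configuration.

```python
from enum import Enum


class Action(Enum):
    RESOLVE = "resolve"      # the bot answers in the thread
    ROUTE = "route"          # hand the issue to a service desk agent
    DISREGARD = "disregard"  # not an IT request (announcement, joke, chatter)


# Hypothetical values: the channel bar is deliberately higher than the DM bar.
RESOLVE_THRESHOLD = {"dm": 0.70, "channel": 0.90}
IT_ISSUE_THRESHOLD = 0.50


def decide(surface: str, it_issue_score: float, resolve_confidence: float) -> Action:
    """Choose a response for one message on the given surface ('dm' or 'channel')."""
    if it_issue_score < IT_ISSUE_THRESHOLD:
        return Action.DISREGARD
    if resolve_confidence >= RESOLVE_THRESHOLD[surface]:
        return Action.RESOLVE
    return Action.ROUTE


# The same prediction is acted on in a DM but routed to an agent in a channel.
print(decide("dm", 0.95, 0.80))
print(decide("channel", 0.95, 0.80))
```

With these illustrative numbers, a prediction at 0.80 confidence is good enough to act on privately but not in front of the whole channel.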

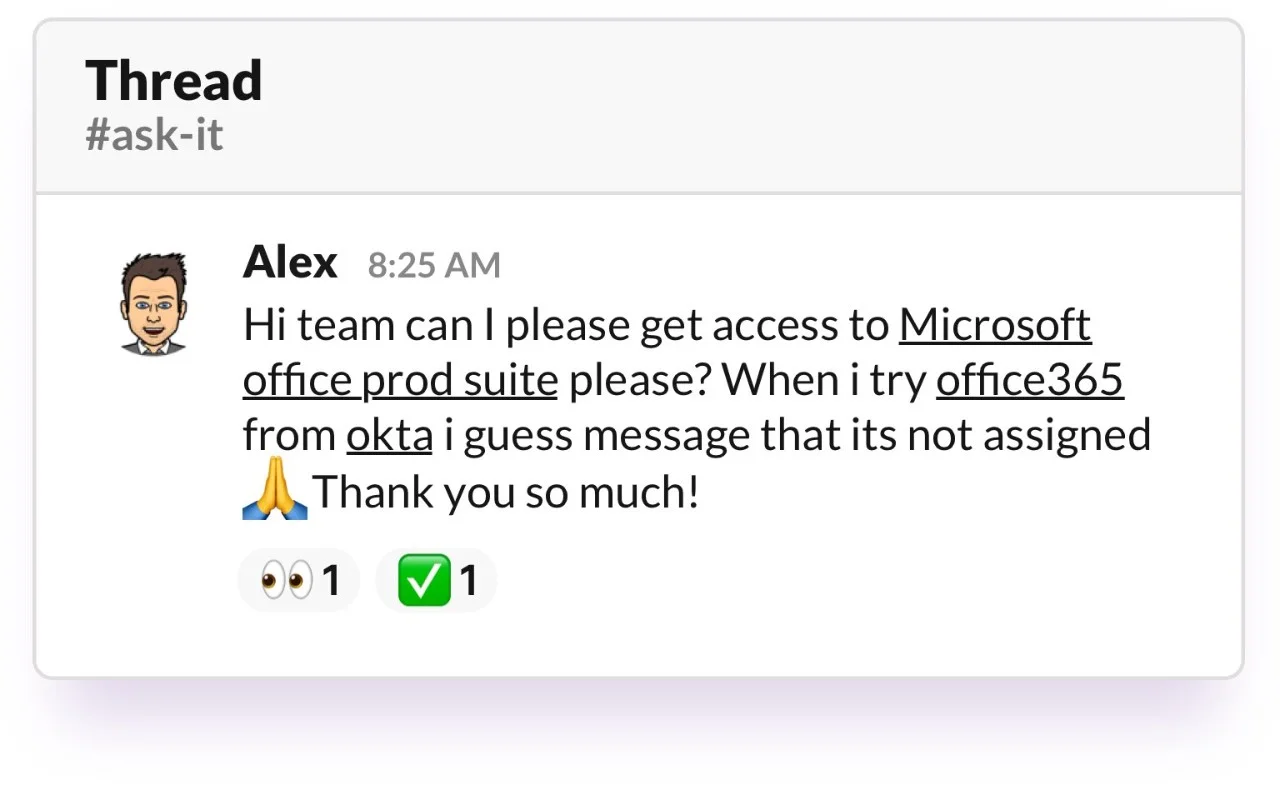

So how does Moveworks actually decide which, if any, response is appropriate for each employee question? As we’ll examine at length in an upcoming blog series, our conversational AI platform was created explicitly to address the shortcomings of historical chatbots that relied on hard-coded decision trees. In the figure below, for example, a hard-coded model focused on keywords could easily misclassify the request for Microsoft Office as a problem with Okta:

Figure 4: A typical, noisy message in an IT support channel, filled with red herrings and irrelevant details that would confuse historical chatbots.

By contrast, Moveworks’ probabilistic engine weighs a number of variables — including syntax, semantics, previous IT tickets from the company, and even the employee’s department — to tackle the nuances of natural human language. Each of our resolution skills, such as our Software Access skill, then “bids” on the right to resolve an employee’s issue, with higher bids corresponding to higher levels of confidence in the model’s prediction. In this case, the Software Access skill generates the only bid that exceeds the confidence threshold, prompting the bot to autonomously provision Microsoft Office.

Figure 5: Moveworks’ probabilistic engine uses an auction system to determine the best action.
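
The bidding idea can be sketched in a few lines. This is a simplified illustration under stated assumptions: the skill names other than Software Access, the bid values, and the threshold are invented, and the real engine weighs far more signals than a single confidence number per skill.

```python
from typing import Dict, Optional


def run_auction(bids: Dict[str, float], threshold: float) -> Optional[str]:
    """Return the skill with the highest bid at or above the threshold, else None."""
    qualifying = {skill: bid for skill, bid in bids.items() if bid >= threshold}
    if not qualifying:
        return None  # no skill is confident enough, so the bot stays out
    return max(qualifying, key=qualifying.get)


# Hypothetical bids for a noisy request that mentions Okta but asks for Office.
bids = {
    "software_access": 0.94,
    "account_unlock": 0.31,
    "knowledge_answer": 0.52,
}
winner = run_auction(bids, threshold=0.90)
print(winner)
```

Here only the Software Access bid clears the bar, so it wins the right to respond. If no bid qualified, `run_auction` would return `None` and the issue would be left for an agent.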

When our bot can’t fully resolve an issue, it must seamlessly hand off to the right person on the service desk. We met this goal by developing a new system of interaction that allows Channel Resolver to give agents the information they need to step in and pick up where the bot left off, facilitating a faster response.

Changing the channel with AI

Figure 6: For employees awaiting IT support, Channel Resolver turns days into seconds.

For the first time, employees can get instant resolution directly in the IT support channel — from software provisioning to account unlocks to installation instructions to the guest WiFi password. And for IT teams, Channel Resolver means that Ask-IT channels no longer require an army of agents to maintain. The verdict is clear: it’s time to supercharge your Slack workspace with AI.

Contact Moveworks to demo and deploy Channel Resolver on your IT support channel.