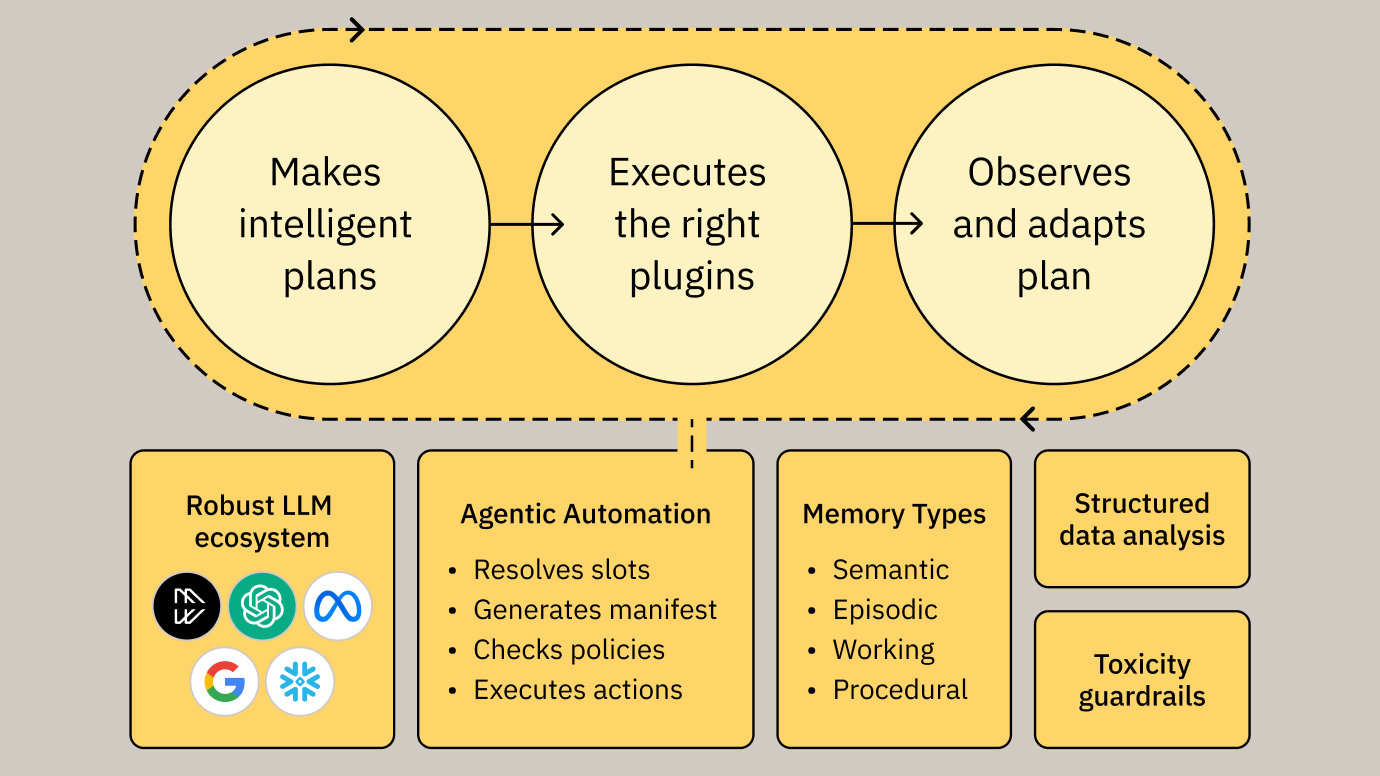

MOVEWORKS’ VISION

AI that adapts to the evolving needs of modern businesses

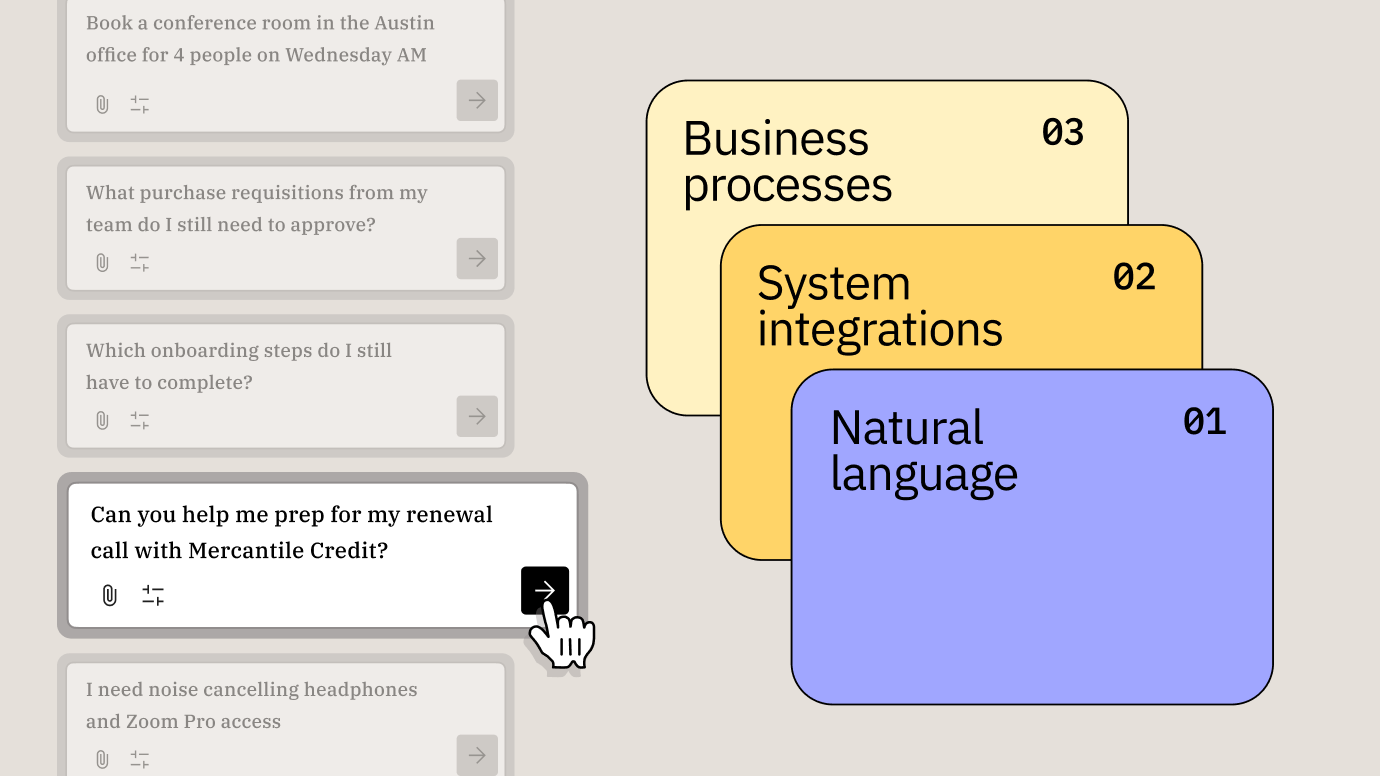

AI THAT FLOWS WITH INFORMATION

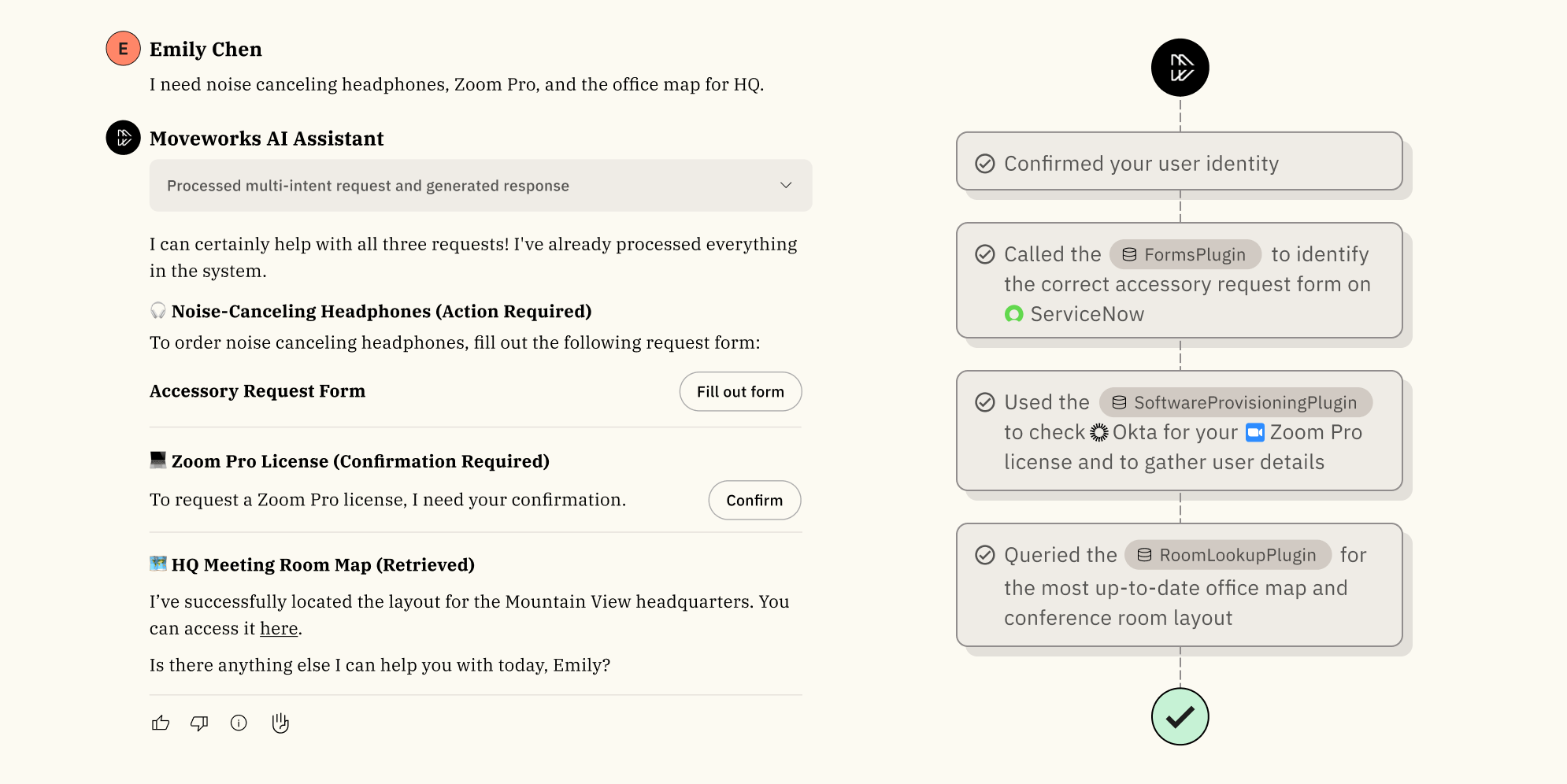

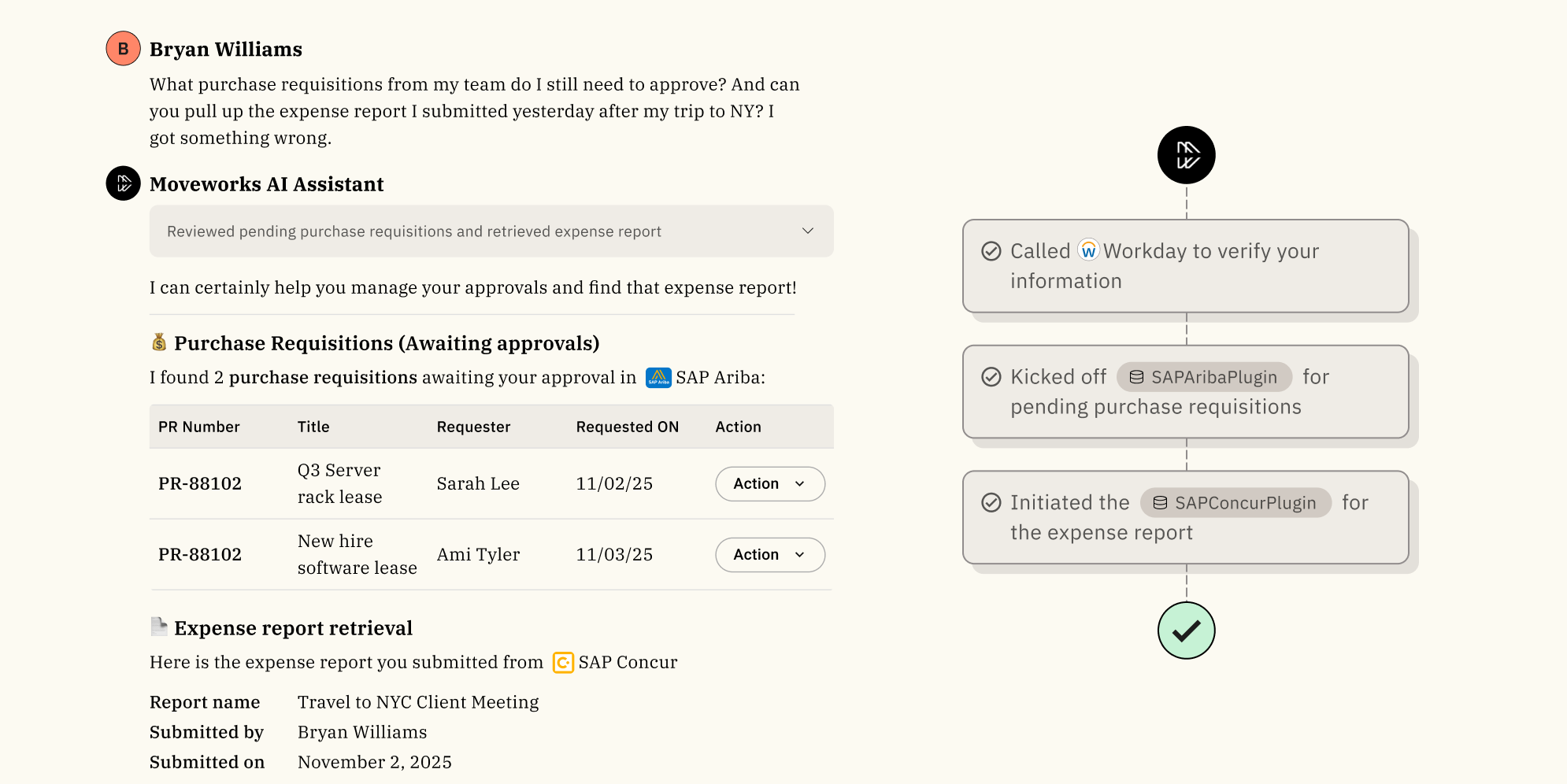

Drive end-to-end workflows from natural language

From interpreting queries to activating plugins and generating artifacts, our agentic architecture turns employee input into tangible business outcomes.

CONTINUOUS ARCHITECTURAL IMPROVEMENTS

Harness the benefits of rapidly improving AI

Our agentic platform leverages tested LLMs and our own Reasoning Engine to remove friction from the employee work day.

BRING NEW AI AGENTS TO LIFE

Unified extensibility experience

Our platform empowers developers to build and securely extend AI agents across the enterprise.

RESHAPE YOUR BUSINESS FUTURE

Transform your workforce with Moveworks’ agentic AI

Moveworks’ AI delivers agentic workflow design, advanced automation, and a growing plugin ecosystem to scale your business operations.

Do more with less by leveraging AI that uplevels employee experience across every department

Modernize your service desk to resolve routine issues instantly

- Automate incident triage to reduce service downtime

- Accelerate software provisioning to optimize productivity

- Orchestrate workflow execution via form completion

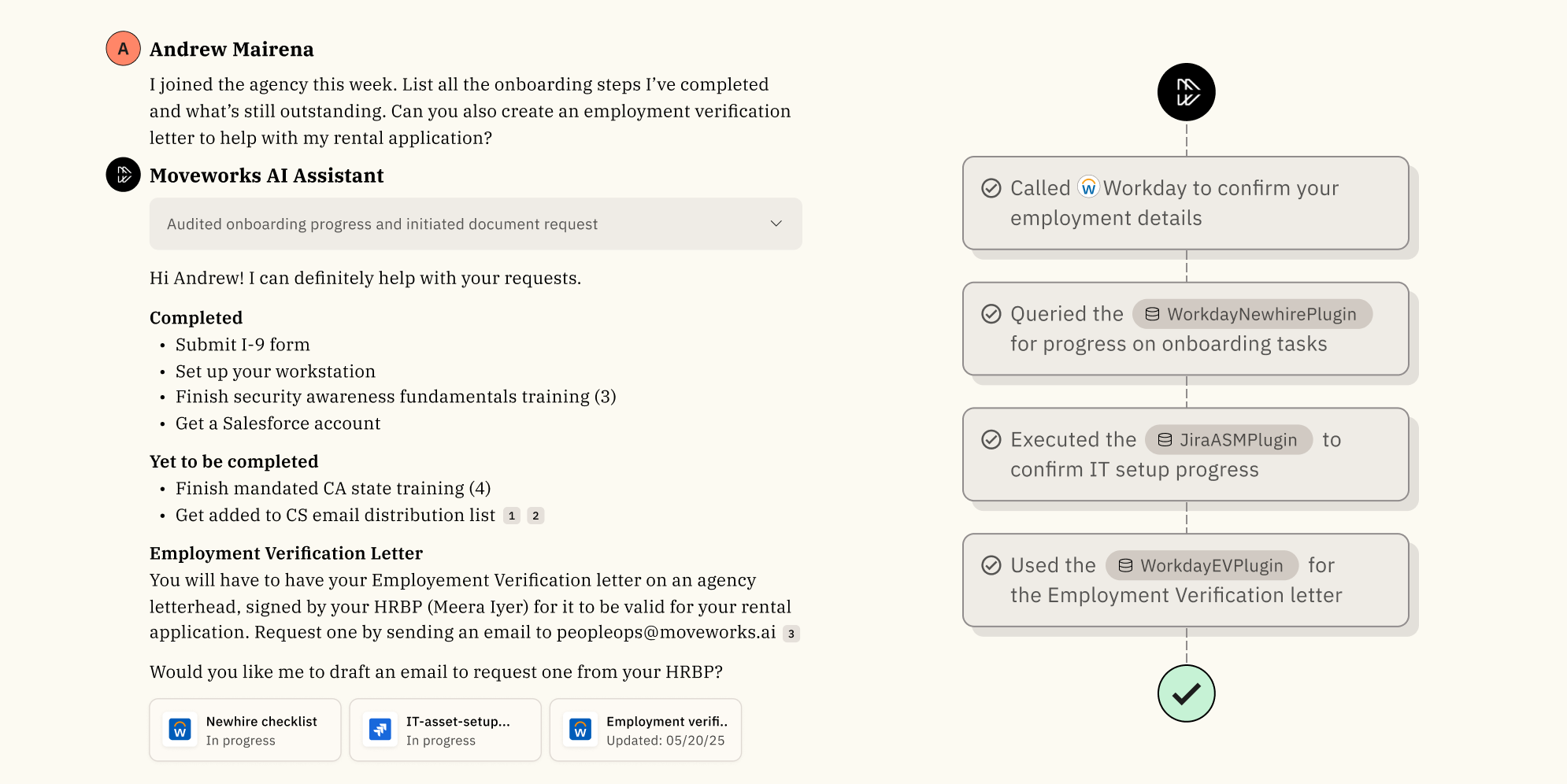

Deliver instant HR support throughout the employee lifecycle

- Streamline the onboarding process for new agency employees

- Simplify everyday HR operations for every employee in every department

- Drive retention with easy access to time-off booking, benefits, and recognition sharing

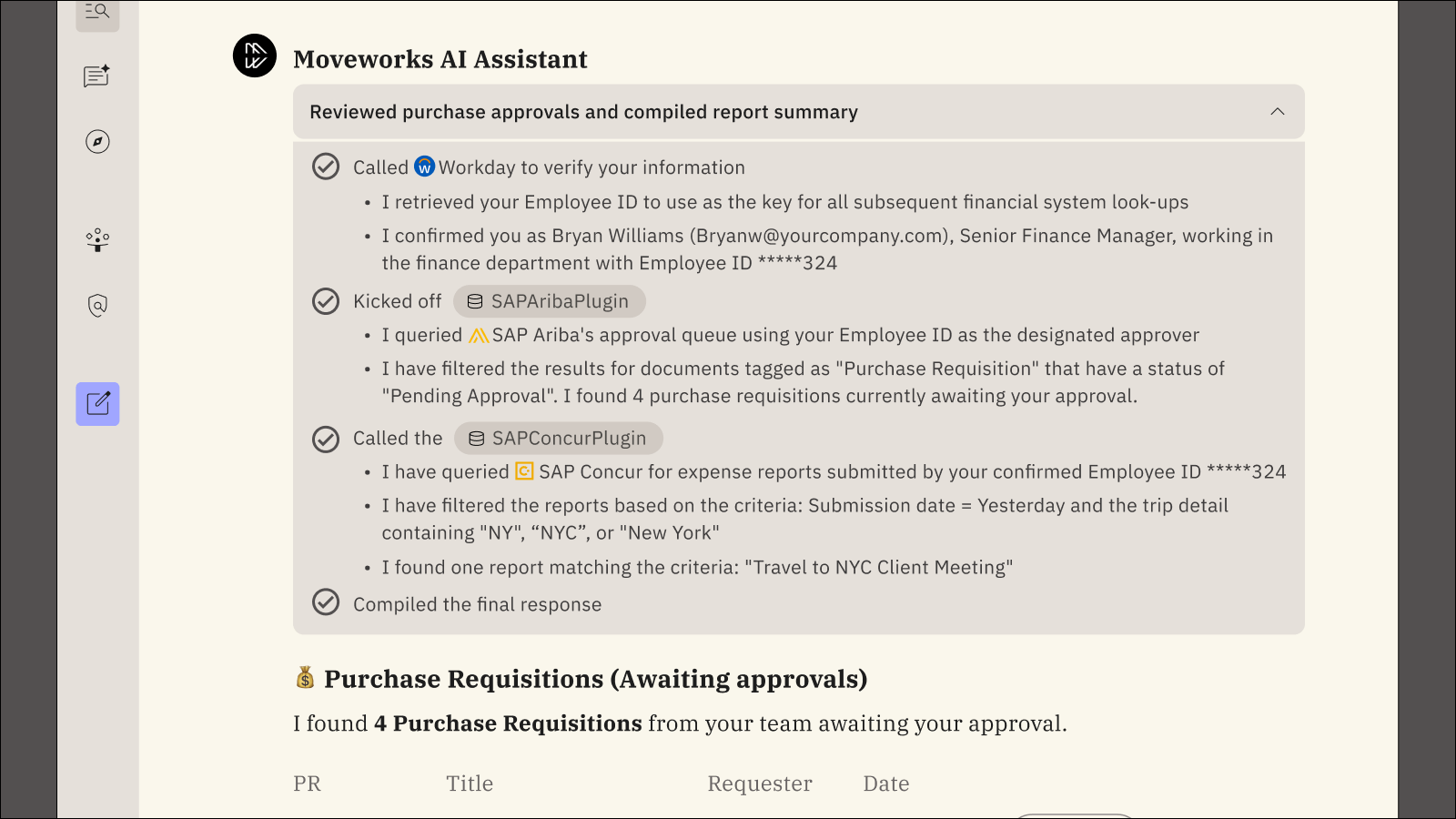

Uplevel your finance operations and reduce repetitive tasks

- Ensure accurate payments through automated billing inquiries

- Streamline expense reporting for faster employee reimbursements

- Accelerate procurement cycles with instant order tracking across systems

Automate building requests and make facility usage easier

- Optimize workspace utilization with seamless and automatic room booking

- Accelerate response times for building maintenance requests

- Simplify badge management and facilitate secure building access

AI that meets all your operational needs — all in one platform

OUT-OF-THE-BOX INTEGRATIONS

Integrates with all your key applications and systems

Seamlessly integrating with today’s most business-critical apps, Moveworks enables every employee to find the right answers right away and automate governed tasks securely across systems.

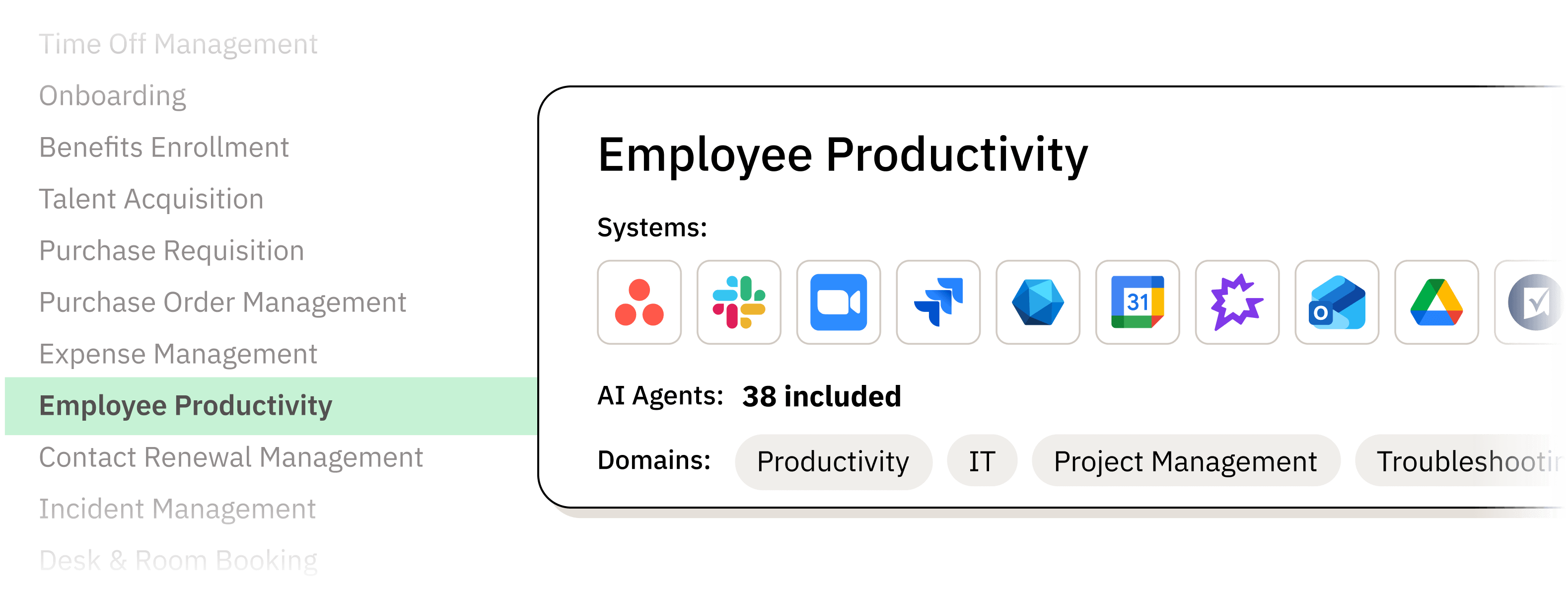

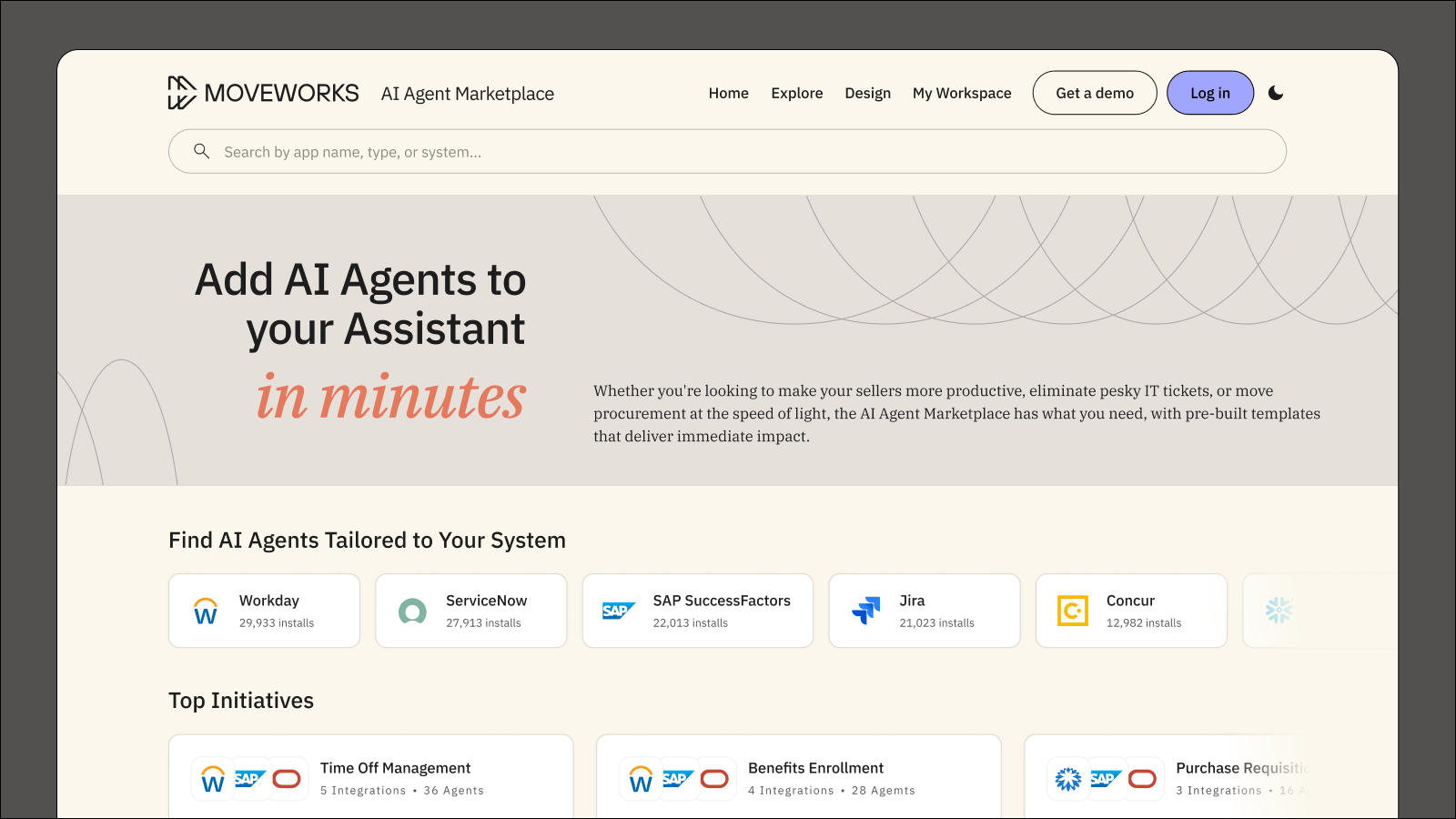

BUSINESS INITIATIVES

Curated sets of AI agents to power enterprise demands

AI Agent Marketplace lets any enterprise organization easily deploy a series of AI agents to address common use cases, while Agent Studio enables customizations for specialized workflows.

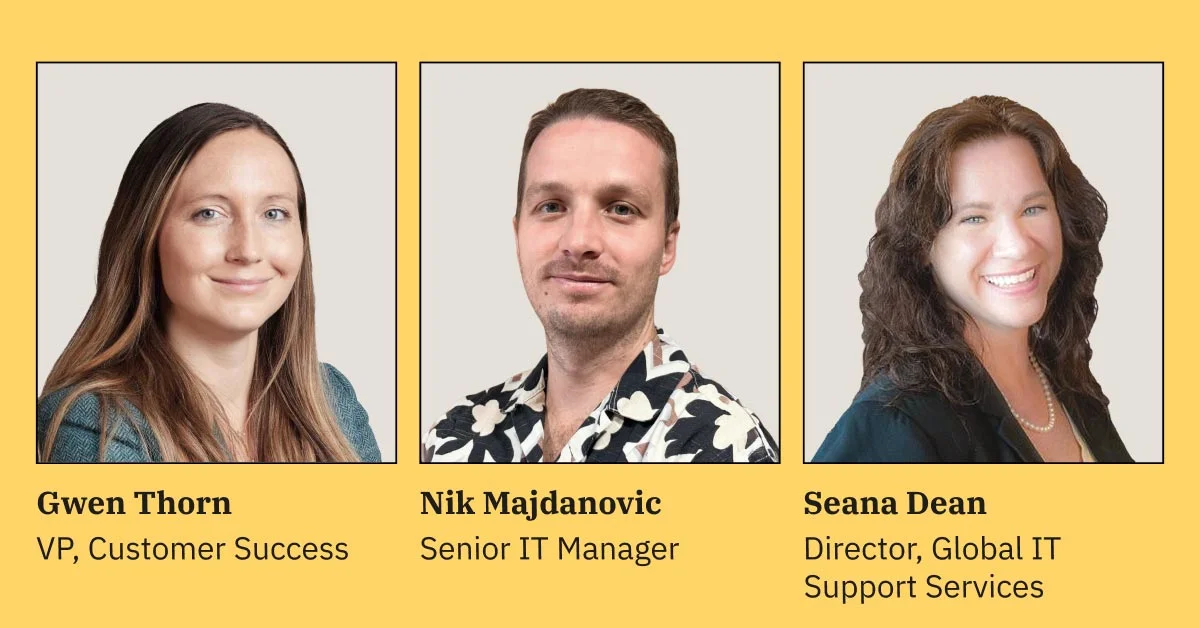

Success stories and statistics that speak for themselves

"Moveworks is an outstanding chatbot and Agentic AI software platform that allows for targeted resolution and support to the end user."

An Executive Director, Enterprise Solutions and Automation, in the Healthcare and Biotech industry gives the Moveworks Platform a 5/5 rating in the Gartner Peer Insights™ Artificial Intelligence Applications in IT Service Management (Transitioning to AI Applications in IT Service Management) market. Read the full review here: https://gtnr.io/UWebMCNdK

Disclaimer: Gartner® and Peer Insights™ are trademarks of Gartner, Inc. and/or its affiliates. All rights reserved. Gartner Peer Insights content consists of the opinions of individual end users based on their own experiences, and should not be construed as statements of fact, nor do they represent the views of Gartner or its affiliates. Gartner does not endorse any vendor, product or service depicted in this content nor makes any warranties, expressed or implied, with respect to this content, about its accuracy or completeness, including any warranties of merchantability or fitness for a particular purpose.

Deploy a production-grade agentic AI Assistant to all employees

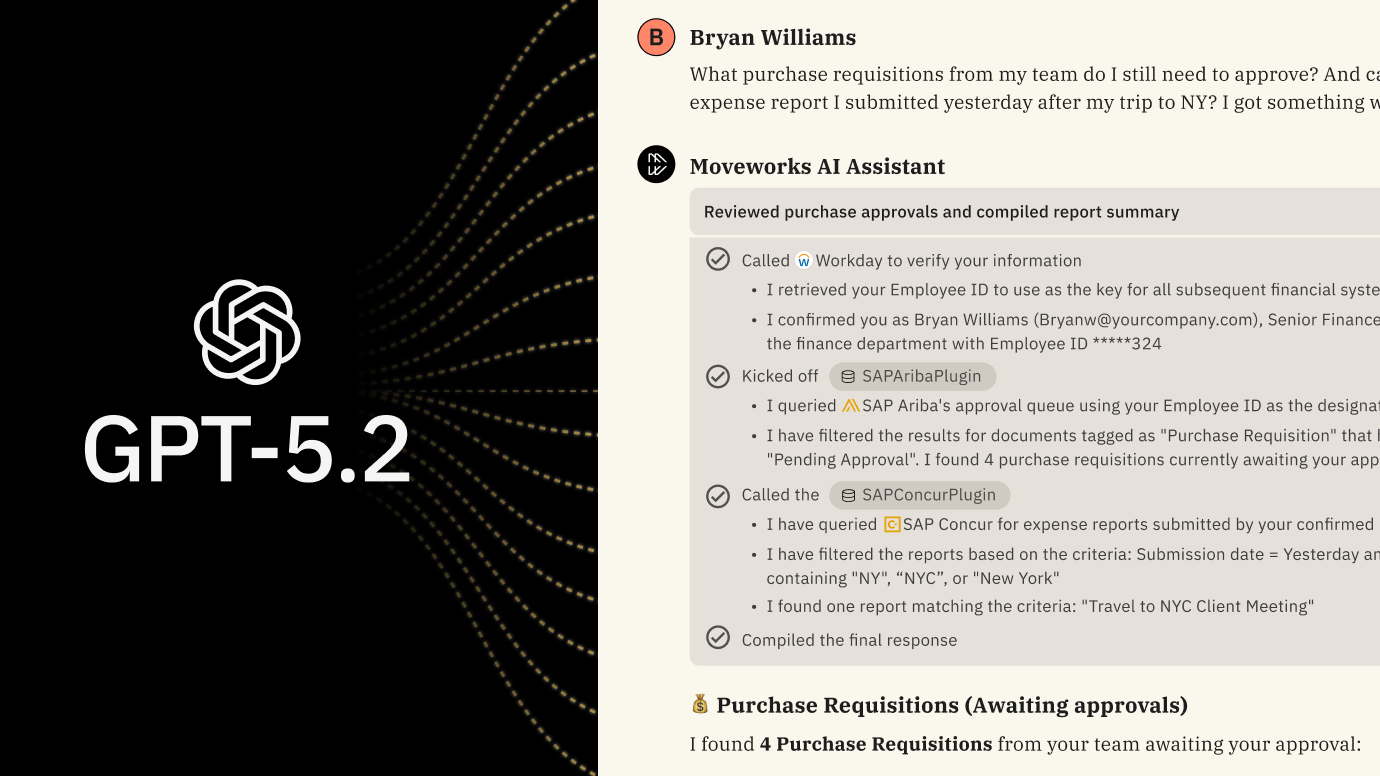

Cutting-edge LLMs

Built on the world’s most advanced LLMs from providers like OpenAI, the Moveworks Reasoning Engine iteratively finds the best solution for every employee issue.

Knowledge grounding

Be confident that every response is governed by role, secure by design, and backed by your company’s data. Answers include inline citation links to source content.

Robust evaluations

Our expert annotators, both human and AI, continuously measure the performance of every production model and of the Moveworks AI Assistant overall to guide ongoing enhancements.

Fine-tuned models

Rely on our latency-optimized, in-house search and NLU models, fine-tuned on proprietary data and hosted on our proprietary infrastructure for the best speed, scalability, and seamless upgrades.

Entity grounding

Use a rich representation of your company’s specific acronyms and other named entities so that AI Assistant queries and search results are handled in the context of your business.

Responsible AI

We protect your brand and data security with comprehensive safety and security guardrails. These advanced protocols prevent the AI Assistant from engaging in toxic behavior or falling for dangerous direct or indirect prompt injection attacks.

Other ways agentic AI can elevate your entire organization

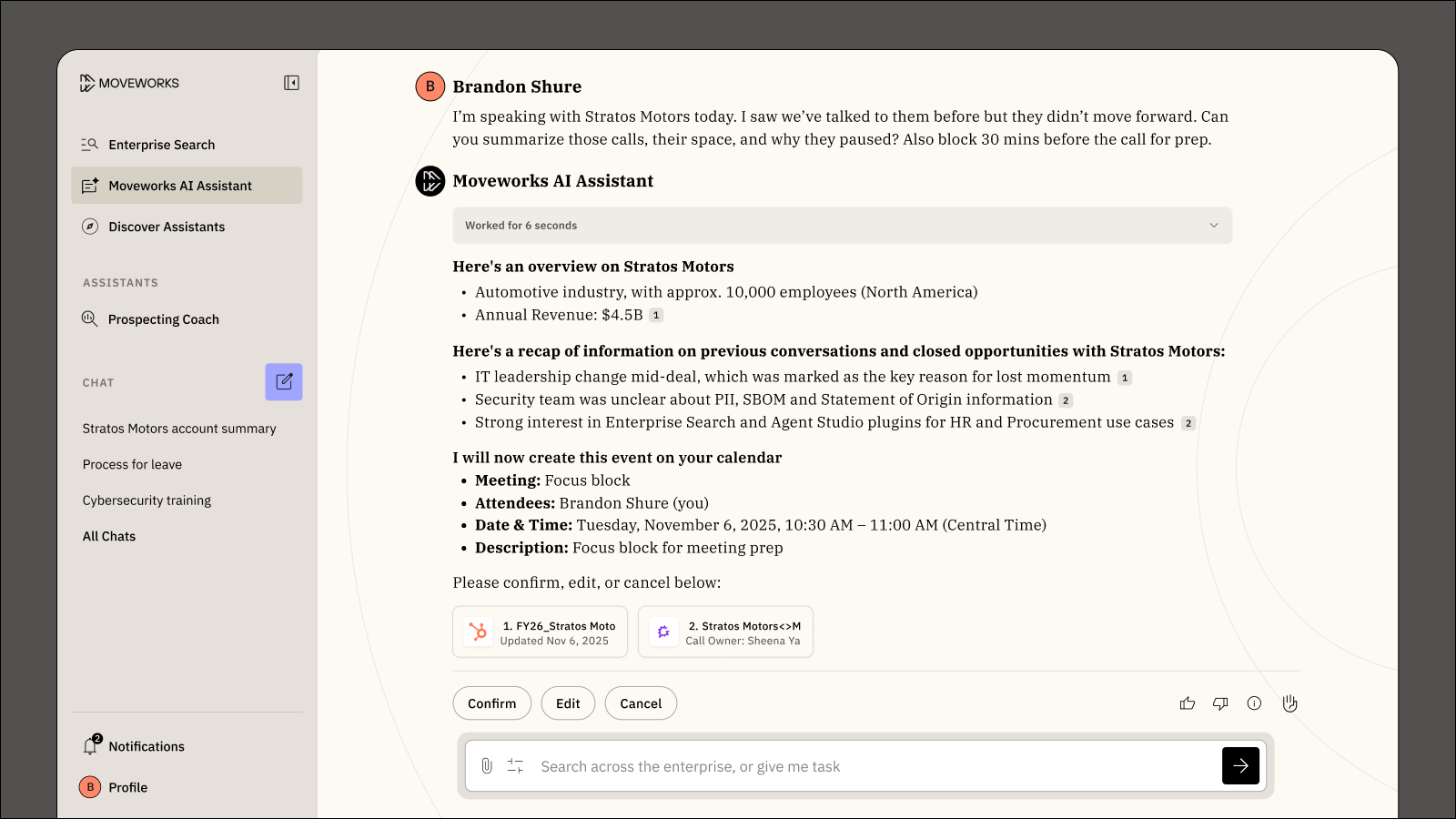

AI Assistant

Understands context, reasons like a human, and works across your existing apps for fast, accurate, and context-aware search with end-to-end task automation.

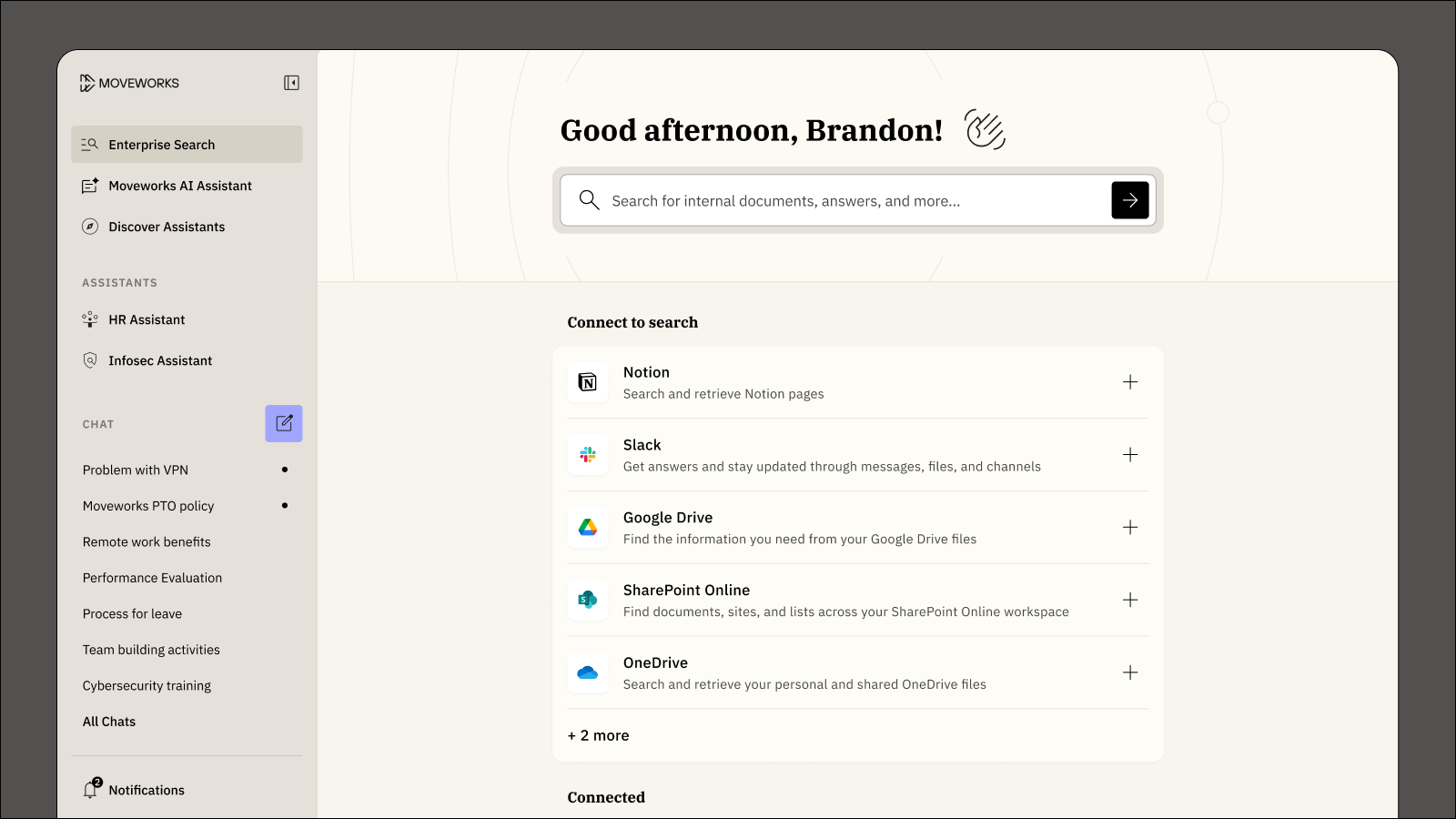

Enterprise Search

Find the right answers and easily pinpoint exact resources, no matter where they live or what format they take, in a search-optimized surface built for information discovery and retrieval.

Reasoning Engine

Selects the right tools to achieve every employee’s end goal, automating complex, multi-step processes reliably and efficiently with rigorous security protocols and real-time governance.

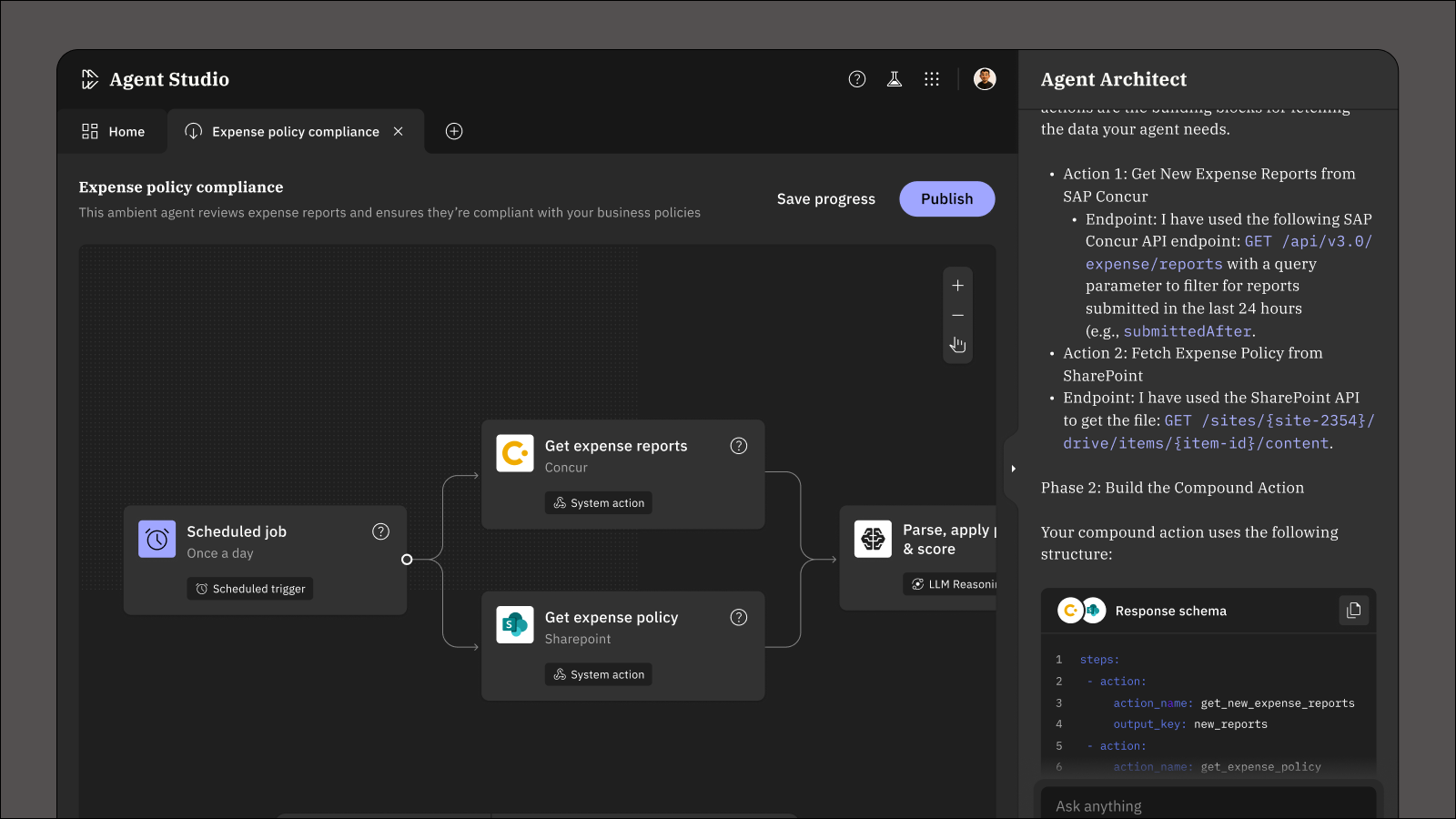

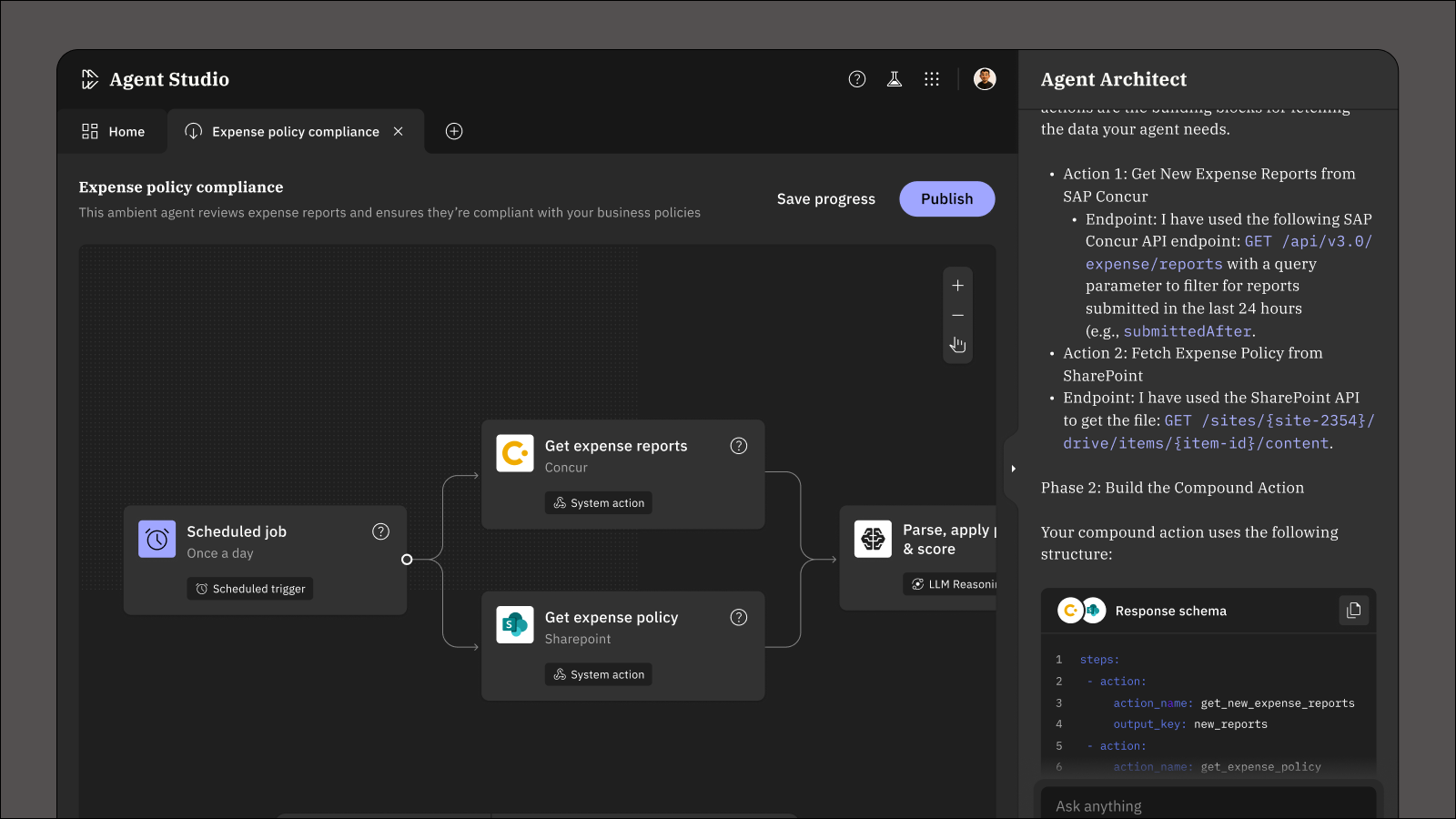

Agent Studio

Enables developers to easily build and deploy AI agents that plan, reason, and securely execute actions across systems to address almost any business need.

AI Agent Marketplace

Speeds up time-to-deploy with a curated library of pre-built, production-proof AI agents that are customizable and free to install, with quick connector setup and MCP support.